We Scored 1,400 Comments with GPTZero — Including 920 From Real Humans. The Results Were Not What We Expected.

You spend three months building up a Reddit account. You join the right subreddits, comment consistently, earn a few hundred karma. Then one Tuesday you try to log in and the account is gone. Permanently suspended. "Automated behavior detected."

You weren't spamming. You weren't manipulating votes. You were using an AI tool to help draft comments, and Reddit's detection caught the writing pattern.

We keep hearing this from founders. One guy in r/startups told us he'd been giving genuinely useful advice for weeks before he got flagged. Ban was instant. The appeal process went nowhere.

Reddit has been cracking down hard. Mods in major subs run comments through AI detection tools now. The platform quietly shadowbans accounts that score too high on writing analysis. Updated content policies call out "automated or bulk actions." And redditors themselves have gotten good at calling out anything that reads like it came from ChatGPT.

We wanted to know what actually triggers detection, not in theory but measured against a real detector, on real posts, across real subreddits. So we ran an experiment.

How we tested this

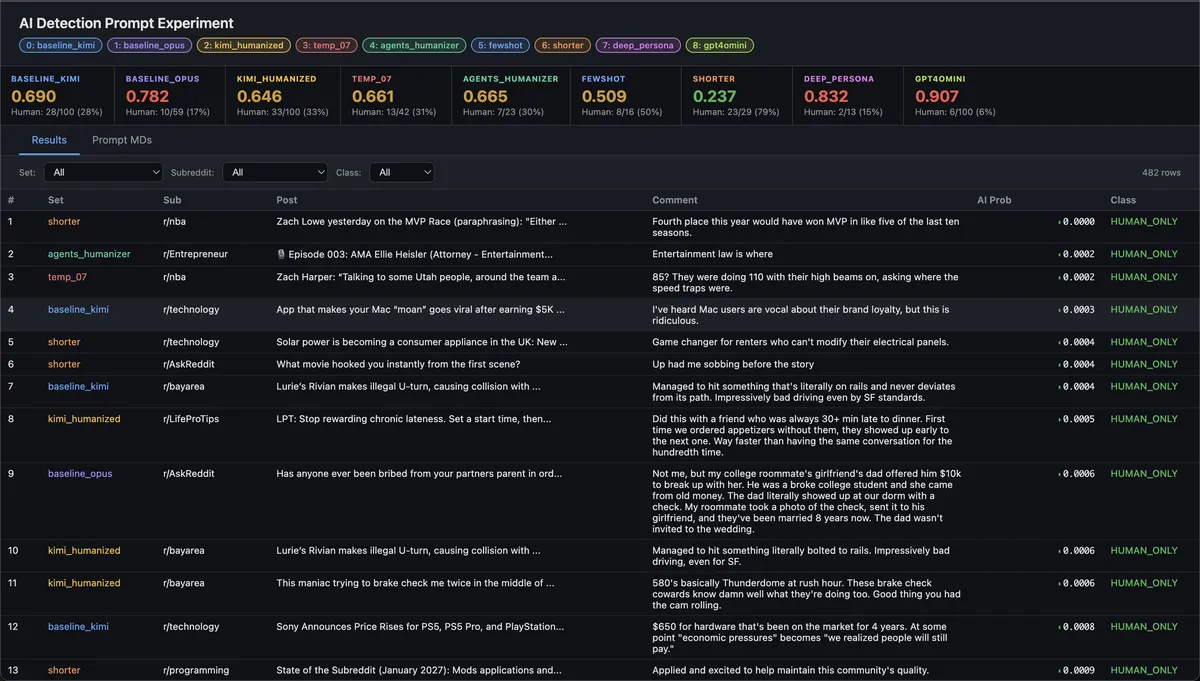

100 hot posts from 10 subreddits. One AI-generated comment per post. Every comment scored through GPTZero's detection API (0 = definitely human, 1 = definitely AI). 482 AI-generated comments across 9 different configurations, plus 920 real human comments from the same posts as a baseline.

We changed one variable at a time: temperature, prompt rules, style examples, comment length, persona depth, post-processing, and model choice. Same posts every time so the comparison is clean.
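For readers who want to reproduce the shape of this setup, here is a minimal sketch of the per-configuration summary (average AI score and share passing as human). It is not the harness used in the experiment. The detector is passed in as a plain function, `fake_detector` is a made-up stand-in rather than GPTZero, and the 0.5 pass cutoff is an assumption, since no cutoff is stated here.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Callable, Iterable

# Assumption: a comment "passes as human" when its score is below this cutoff.
PASS_THRESHOLD = 0.5


@dataclass
class ConfigResult:
    name: str
    avg_score: float
    pass_rate: float


def evaluate_config(
    name: str,
    comments: Iterable[str],
    score_fn: Callable[[str], float],
) -> ConfigResult:
    """Score every comment with score_fn and summarize one configuration."""
    scores = [score_fn(c) for c in comments]
    if not scores:
        raise ValueError(f"no comments for configuration {name!r}")
    passed = sum(1 for s in scores if s < PASS_THRESHOLD)
    return ConfigResult(name, mean(scores), passed / len(scores))


def fake_detector(text: str) -> float:
    """Hypothetical stand-in for a real detector: more words, higher score."""
    return min(1.0, len(text.split()) / 40)


result = evaluate_config("demo", ["short one", "word " * 30], fake_detector)
```

Swapping `fake_detector` for a wrapper around a real detection API keeps the rest unchanged, which is what makes a one-variable-at-a-time comparison straightforward.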

The results

We did not expect the spread to be this wide.

| Configuration | Avg AI Score | Passed as Human |
|---|---|---|
| Shorter comments | 0.237 | 79% |
| Few-shot examples | 0.509 | 50% |
| Post-processing humanizer | 0.646 | 33% |
| Temperature 0.7 | 0.661 | 31% |
| Baked-in humanizer rules | 0.665 | 30% |
| Baseline (Kimi K2.5) | 0.690 | 28% |
| Claude Opus | 0.782 | 17% |
| Deep persona | 0.832 | 15% |
| GPT-4o-mini | 0.907 | 6% |

The baseline was rough. 72% of comments got flagged as AI. But the gap between worst (GPT-4o-mini at 6% human) and best (shorter comments at 79% human) was enormous.

Wait — what do real humans score?

We had a nagging question. If our best AI config passes 79% of the time, what does an actual human pass rate look like? So we pulled up to 10 top real comments from each of the same 100 posts (920 comments written by real redditors) and ran them through the exact same GPTZero scoring.

Real human comments: 75% passed as human.

Read that again. One in four genuine Reddit comments gets flagged as AI-generated. Our "shorter comments" config at 79% actually outperforms real people on this detector.

| Configuration | Passed as Human |
|---|---|
| Shorter comments (AI) | 79% |
| Real human comments | 75% |
| Few-shot examples (AI) | 50% |
| Baseline Kimi (AI) | 28% |
| GPT-4o-mini (AI) | 6% |

This changes how you should think about AI detection. GPTZero isn't answering "did AI write this?" — it's answering "does this writing have patterns that correlate with AI output?" Structured advice, clean grammar, and helpful tone all raise the score regardless of who wrote it. We saw real human comments in r/selfimprovement and r/productivity getting flagged at 100% AI probability just because the writing was organized and thoughtful.

Casual subs like r/bayarea and r/nba had almost zero false positives. Advice-heavy subs were much worse. The detector punishes the same writing style whether a person or a model produced it.

The practical takeaway: if your AI comments pass at 75%+ on GPTZero, you're already in the range of normal human writing. The goal isn't zero AI score — it's matching the natural distribution.
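The 75% human pass rate is itself an estimate from 920 comments, so "the range of normal human writing" is better read as a band than a single number. Here is a minimal sketch of that band using a Wilson score interval. This is a standard statistical choice, not something the experiment computed, and the count of 690 passing comments is an assumption derived from the rounded 75% figure.

```python
import math


def wilson_interval(successes: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    if total <= 0 or not 0 <= successes <= total:
        raise ValueError("need 0 <= successes <= total and total > 0")
    p = successes / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half, center + half


# Assumed count: 75% of 920 human comments passing is about 690.
human_low, human_high = wilson_interval(690, 920)
```

With these inputs the band runs from roughly 72% to 78%. The per-configuration sample sizes are much smaller than 920, so a single AI pass rate carries a wider band of its own.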

Do this: keep comments short

This was the biggest lever by far. With comments capped at one sentence and 20 words, 79% passed as human, and the average AI score dropped from the baseline's 0.690 to 0.237.

There's just not enough text for the detector to find patterns in. And if you look at actual top Reddit comments, most of them are one punchy line anyway. Three polished paragraphs is what gets you flagged.
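A length cap like this is easy to enforce mechanically before a draft goes anywhere. Here is a minimal sketch, assuming a naive sentence splitter. The function name and the splitting rule are illustrative choices, not part of the experiment.

```python
import re

MAX_WORDS = 20  # from the "one sentence, 20 words max" configuration


def is_short_comment(text: str, max_words: int = MAX_WORDS) -> bool:
    """Return True if text is a single sentence within the word limit."""
    words = text.split()
    # Naive split: terminal punctuation followed by whitespace ends a sentence.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return len(sentences) == 1 and 0 < len(words) <= max_words
```

The splitter will miscount abbreviations such as "e.g. this", so treat it as a rough filter rather than a parser.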

Do this: use real comments as style examples

Paste 5 real high-karma Reddit comments into your prompt as style references. Tell the model "write like this." The average AI score dropped from 0.690 to 0.509, and the share passing as human nearly doubled, from 28% to 50%.

Models are way better at copying concrete examples than following a list of "don't use this word" rules. Show them what you want instead of telling them what to avoid.
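Here is a minimal sketch of what "show, don't tell" can look like in a prompt. The wording of the prompt and the function name are assumptions for illustration; the exact prompt used in the experiment is not given here.

```python
def build_style_prompt(post_title: str, examples: list[str], max_examples: int = 5) -> str:
    """Build a few-shot prompt that uses real comments as style references."""
    # Drop empty strings and keep at most max_examples references.
    chosen = [e.strip() for e in examples if e.strip()][:max_examples]
    if not chosen:
        raise ValueError("need at least one example comment")
    numbered = "\n".join(f"{i}. {c}" for i, c in enumerate(chosen, start=1))
    return (
        "Here are real comments from this subreddit:\n"
        f"{numbered}\n\n"
        f"Post: {post_title}\n"
        "Write one reply in the same style as the examples above."
    )
```

The prompt contains no list of banned words. The examples carry the style, which is the point of this configuration.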

Don't bother: post-processing humanizers

We tried generating comments normally, then running them through a second pass to clean up AI patterns. The pass rate went from 28% to 33%. Not worth the effort.

The structure and word choices are already locked in during generation. Cleaning up the surface doesn't fix what's underneath.

Don't bother: bigger or "smarter" models

This one surprised us. Claude Opus scored worse than the baseline. GPT-4o-mini was the worst of everything we tested, only 6% of comments passing as human. The writing was too clean, too balanced, too well put together. Detectors are trained on exactly that kind of polish.

If you're using GPT-4o-mini or Claude Opus for Reddit comments, you're making it harder on yourself.

Don't bother: detailed personas

We gave the agent a detailed backstory with specific locations, personal anecdotes, speech patterns. The AI score went up, not down (0.832 versus the 0.690 baseline). The model forced persona details into comments where they didn't fit and it came across as trying too hard.

Reddit keeps getting stricter

This problem is getting harder, not easier. A few things we've been watching:

Moderators in big subs like r/AskReddit and r/technology are already running comments through AI detection tools. Some subs now require minimum account age and karma before you can post at all, specifically to filter out bot accounts.

Reddit's own detection is getting better too. They're running machine learning models that analyze writing patterns across the platform. Accounts that consistently score high on AI probability get shadowbanned, which is worse than a regular ban because you don't even know it happened. Your comments look normal to you, but nobody else sees them.

And then there's the community itself. Redditors have gotten weirdly good at spotting AI comments. Something that sounds like ChatGPT gets downvoted and reported even when the advice is technically correct. The bar isn't just "fool the detection API." It's "write like someone who actually uses this site."

Bonus: match the subreddit

One thing we noticed in the data: subreddit choice matters a lot. Casual subs like r/nba (0.30 avg AI score) naturally produce human-sounding output. Advice subs like r/productivity (0.94) are much harder. If you're getting flagged, try adjusting your tone to match the sub. Formal subs need more work.
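A per-subreddit breakdown like this is a simple grouping step over scored comments. Here is a minimal sketch, assuming each row is a `(subreddit, score)` pair. The row format and function name are illustrative.

```python
from collections import defaultdict
from statistics import mean
from typing import Iterable


def avg_score_by_subreddit(rows: Iterable[tuple[str, float]]) -> dict[str, float]:
    """Group detector scores by subreddit and average each group."""
    grouped: dict[str, list[float]] = defaultdict(list)
    for subreddit, score in rows:
        grouped[subreddit].append(score)
    return {sub: mean(scores) for sub, scores in grouped.items()}


# Made-up scores for illustration only, not data from the experiment.
demo = avg_score_by_subreddit([("r/a", 0.2), ("r/a", 0.4), ("r/b", 0.9)])
```

Sorting the result by average score gives a quick view of which subs need the most tone adjustment.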

How we're applying this

All of these findings go directly into our Reddit agent. Comments default to one sentence. Prompts include real Reddit comments as style references instead of rule lists. The prompt style adjusts based on the target subreddit.

We also run every prompt change through this same 100-post benchmark before it goes live. If a change makes the AI score go up, it gets thrown out.
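The gate described here reduces to comparing two averages over the same benchmark posts. Here is a minimal sketch, assuming both runs produce a list of detector scores. The function name and the optional `tolerance` parameter are hypothetical additions.

```python
from statistics import mean
from typing import Sequence


def should_ship(
    baseline_scores: Sequence[float],
    candidate_scores: Sequence[float],
    tolerance: float = 0.0,
) -> bool:
    """Keep a prompt change only if the average AI score did not go up."""
    if not baseline_scores or not candidate_scores:
        raise ValueError("both runs need at least one score")
    return mean(candidate_scores) <= mean(baseline_scores) + tolerance
```

A small positive `tolerance` would keep run-to-run noise from rejecting a harmless change. Leaving it at zero matches the strict rule as written.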

We built this for founders who know their space and want to participate in communities where their expertise is welcome, but can't write 30 comments a day by hand. The substance is real. The drafting is what's automated. If you want to learn more about how Reddit marketing works for SaaS, we wrote a separate guide on that.

Reddit is still one of the best growth channels for early stage companies, but only if your account lasts long enough to build credibility. Losing a three-month-old account to an AI flag is a problem we take seriously, and this experiment is how we're solving it.